CheatGPT*: some thoughts on AI and assignments

About the author

Richard Bailey Hon FCIPR is editor of PR Academy's PR Place Insights. He teaches and assesses undergraduate, postgraduate and professional students.

Let’s start with an easy question.

In a world of generative AI, who is the smartest person in the room? It’s not a trick question: the machine isn’t a person and isn’t yet all that smart. These are early days.

The smartest person is surely the one who can write better prompts and knows how to use AI as an assistant, as Philippe Borremans shows in his ebook Mastering Crisis Communication with ChatGPT.

The least smart person is the one living in denial. I’ll let you decide if that’s more likely to be a lecturer or a student.

That’s my response. But what’s ChatGPT’s answer to the same question?

So how did I do? How did it do?

(For the uninitiated, I logged into OpenAI’s ChatGPT for free using my Google account details. You may have to wait a while as the system can often be overloaded, but when it’s running you get your response in seconds. To spell it out, there are no more barriers to using this tool than there are to making a Google search or using Wikipedia. There’s also a more powerful paid-for version, ChatGPT 4.)

Cue moral panic among educators. I’ll spell it out.

Historically, an academic essay has been a hard assignment for students, but an easy one for academics to assess. Take this example from the public relations scholarly literature.

Write a critique of the Four Models of Public Relations (Grunig & Hunt 1984)

It’s a hard assignment for students who are new to the discipline as it requires them to delve back through 40 years of academic literature to understand where and why the four models originated and to explore how others have responded to this much-debated source. It involves much heavy lifting and grappling with some sophisticated arguments. It also involves applying whichever referencing convention is demanded by the institution.

Much easier to copy. I’m not openly advocating cheating, just making the sensible point that it’s easier to selectively copy the references from a trusted source than it is to reinvent the wheel. But this also makes the point that identifying an academic offence (ie cheating) is rarely a simple binary question, but involves some acute judgement over sourcing and citations.

Meanwhile, the same essay is easy to mark because the lecturer setting it will be familiar with the main arguments in the literature so will quickly be able to reward students for identifying relevant sources, summarising their arguments and correctly citing them.

That’s how it’s been up to now.

I asked ChatGPT to write a 1500 word critique of the Four Models of Public Relations and within seconds this is what it produced. I’ll provide some commentary on key sections of the chatbot’s response.

Commentary: This is a sound opening. There is nothing wrong here. While the key source is referenced, there’s no citation offered (eg Grunig & Hunt 1984). I note the use of American English spelling (analyze). Again, that’s not wrong, but it may provide a clue as to the source.

The ChatGPT response goes on to describe the Four Models (from press agentry/publicity to two-way symmetric). Again, there’s nothing wrong, though this passage is rather basic for a critical essay in that it describes rather than critiques each of the models.

Commentary: It’s a sensible – and surprisingly often overlooked – observation that 1984 was a different age, not least because of the subsequent emergence of the internet. So the rather bland argument presented here could certainly have been developed. Nor is there any discussion of the power imbalances that make the ideal two-way symmetric model in reality so impracticable, a key point made by critical scholars. So why are eg Pieczka or L’Etang not cited here?

Commentary: This is a sensible, if bland, conclusion. ‘The two-way symmetrical model offers an idealized vision of public relations but may be challenging to implement in practice.’ This is surely the key point, one worthy of further exploration. Where has the two-way symmetric model ever been applied? What are the challenges to implementing it? One is the power imbalance between publics and organisations; the other is the internal power dynamic. Bosses usually deploy public relations in order to win an argument. Symmetry implies a willingness to strategically lose some arguments.

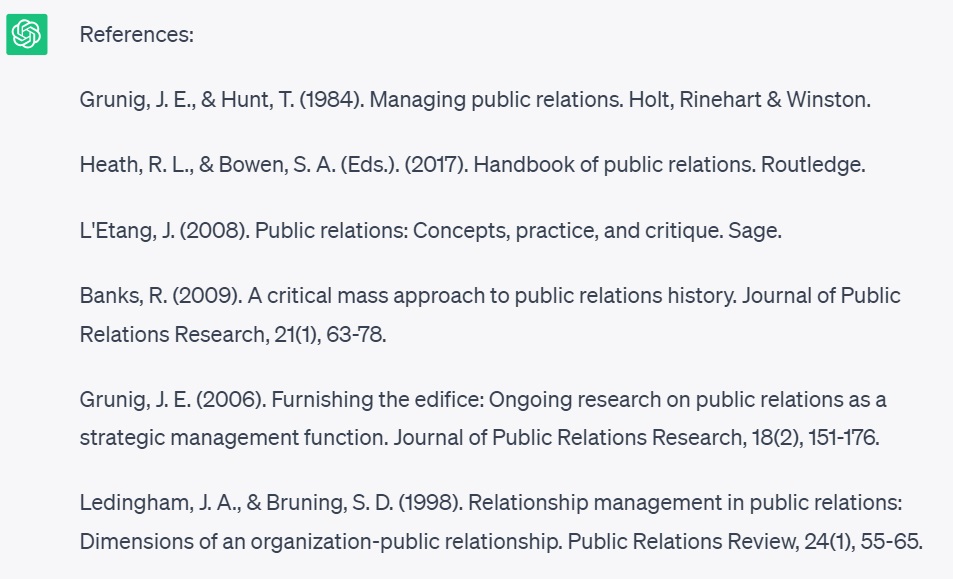

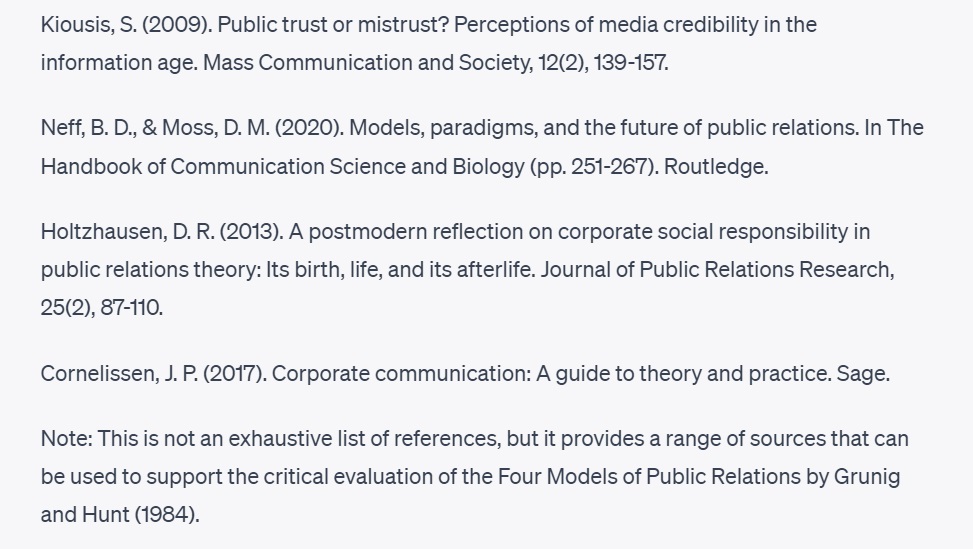

My main critique of this mini essay is not its summary of the argument, but its lack of sources. This would make it of limited use to a student. But it was a matter of seconds to ask ChatGPT to generate the references.

Commentary: This is useful, and potentially a huge time-saver for students. These are sound sources for this essay spanning four decades of scholarship (the most recent citation is to a 2020 publication; it’s common knowledge that ChatGPT draws on sources only up to late 2021).

(To confirm this I asked for the name of the UK prime minister and the response raises a smile: ‘As of my knowledge cutoff in September 2021, the Prime Minister of the United Kingdom is Alexander Boris de Pfeffel Johnson, commonly known as Boris Johnson. However, please note that political positions can change, and it is always recommended to verify the current Prime Minister by referring to up-to-date sources.’ Pity the poor confused patient asked to name the prime minister during Liz Truss’s short stay in 10 Downing Street.)

Commentary: There are more good sources here, and I welcome seeing one that’s new to me among other familiar names. I also welcome the explanatory note provided by ChatGPT.

It’s a help, but it’s not a ready-made essay. It’s ChatGPT not CheatGPT* – see note below.

How are educators to respond?

I’ve sensed a knee-jerk reaction among some that reminds me of the reaction to the arrival of Wikipedia two decades ago. Faced with this new, accessible source of all human knowledge, the message from many educators was that Wikipedia was an unreliable source that should not be cited. Since this was a rather complex message to communicate, the simpler and more memorable version that stuck was that Wikipedia was banned.

Rather than banning ChatGPT, the first instinct among many educators has been to up their plagiarism detection game. Turnitin is a favoured tool in this game of cat-and-mouse. Historically it has scanned a student submission for evidence of copying. A high similarity score may indicate plagiarism – but it may not.

But what about students paying a commercial essay writer to produce an essay for them? This would not be detectable by Turnitin assuming the essay writer produced something original (as would surely be part of the commercial deal). But it would certainly be an academic offence (ie cheating).

I’ve seen suspected examples of this and they are detectable to the human eye if not to Turnitin. That’s because a lucid essay based on sophisticated concepts may not pass the sniff test. It may cite some fashionable postmodernist French philosophers, say, but overlook the mostly American academics who are canonical in the public relations literature (see above). It’s not proof that an essay writer has been employed, but it’s an indication there may be something worth investigating.

Turnitin now offers a tool that claims to detect text written in the style of generative AI (humans should be able to do this too: I twice described ChatGPT’s text as ‘bland’ earlier). That may help, but at best it gives you a suspect, not a conviction. And nor does it answer my opening question: who is the smartest person in the room?

Because if we can no more ban ChatGPT than we can ban Wikipedia, what value is there to assessing basic knowledge or standard essay writing?

Higher education should aim higher.

It’s in this context that I welcome the AI in education policy paper published this summer by the Russell Group of research universities. It emphasises the need to support faculty and students in the ethical use of AI (no talk of a ban!) and is based around five principles:

- Universities will support students and staff to become AI-literate.

- Staff should be equipped to support students to use generative AI tools effectively and appropriately in their learning experience.

- Universities will adapt teaching and assessment to incorporate the ethical use of generative AI and support equal access.

- Universities will ensure academic rigour and integrity is upheld.

- Universities will work collaboratively to share best practice as the technology and its application in education evolves.

This surely has to be the right approach. Rather than treating generative AI as a problem to be tackled, why not view it as an educational opportunity that should challenge faculty and students to be better, to show that humans still have the edge?

So rather than relying on detecting evidence of cheating after the event, how about taking preventative steps? This should start with the assignments we’re setting. How can we make these less easy for AI and still appropriately challenging for students?

One clue is that September 2021 cutoff identified earlier – though this may be a short-lived window. Can we set contemporary challenges and rely less on backward-looking essays? This should be possible in our discipline, public relations, but will be more challenging in subject areas such as English Literature or History.

We should also shift the assessment emphasis from marking a product of learning to grading the process of learning. After all, a public relations student will never again be expected to write an academic essay in their professional working life, whereas mastery of the process of deriving insight from data will be endlessly useful.

How does this work? You could set a ‘wicked problem’ such as solving the climate emergency. As a wicked problem, it defies a simple answer. So you don’t assess the answer. You assess the student (or group of students) on the approach they propose for addressing the problem. Have they considered involving climate scientists as well as communicators on the team? Have they given sufficient weight to public policy as well as public communication? Have they learnt from what’s gone before?

If that’s too big and bold, how about this approach that already works well (and has done so for years before ChatGPT emerged)? The CIPR professional assignments give equal weighting to the product and the process. And the product is not an off-the-shelf academic essay, but rather a bespoke problem-solving assignment (such as an evidence-based proposal to senior management). Based as they are in the candidate’s real work, these are hard (if not impossible) to generate using AI tools.

The reflective assignment demands evidence of how the individual conducted their research and used learning and insight to solve the problem. It demands reflection on their development as a professional and their response to the ethical challenges they faced. Generative AI may be able to help at the margins, but this level of personal reflection is beyond the ‘bland’, generic responses currently provided.

If all else fails, we could revert to closed book exams – but why go back when we should be moving forward?

Better would be to assess students viva voce style about their previously submitted work. In this way the work would be assessed but the real challenge would be to the student’s understanding of the work (process over product) in a live interview. There are problems with this approach, not least the resource implication. I’ve just assessed 60 students working individually on an MA module. You’d need two members of the team on an interview panel as there needs to be evidence of marking and moderation. How long do you need to interview each student and discuss and write up the feedback? Let’s say 30 minutes per student. That’s 30 hours (or four 7.5-hour days) to be accommodated and 60 staff hours to be funded. And what’s to stop those who went through the door early providing tips on the line of questioning to those coming later? So it’s not exactly a level playing field.
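For anyone wanting to test the resource implication against their own cohort, the arithmetic can be sketched in a few lines of Python. This is only an illustration: the function name and its parameters are hypothetical, and the figures are simply the ones from the example above (60 students, 30 minutes each, a panel of two, a 7.5-hour working day).

```python
def viva_workload(students, minutes_per_student, panel_size, hours_per_day):
    """Estimate the cost of a round of viva voce assessments.

    Hypothetical helper for illustration only. Returns a tuple of
    (elapsed hours, working days, staff hours to be funded).
    """
    # Interviews run one after another, so elapsed time scales with cohort size.
    elapsed_hours = students * minutes_per_student / 60
    # Convert elapsed time into working days of the assumed length.
    working_days = elapsed_hours / hours_per_day
    # Every panel member is present for every interview.
    staff_hours = elapsed_hours * panel_size
    return elapsed_hours, working_days, staff_hours


# Figures taken from the example in the text.
elapsed, days, staff = viva_workload(
    students=60, minutes_per_student=30, panel_size=2, hours_per_day=7.5
)
print(f"{elapsed:.0f} hours elapsed, {days:.0f} working days, {staff:.0f} staff hours")
```

Changing any one input (a larger cohort, a third panel member, longer interviews) shows how quickly the cost grows.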

Realistically, the viva voce will stay for research degrees, but will be impractical for taught degrees.

I’ve kept my best suggestion for last. All assignments should be new and different each year (so there’s no bank of copyable work). The CIPR assignments make this explicit by asking the candidate to identify an organisation and find a problem it’s facing. The work is forward-looking and not reliant on historic sources.

Unfortunately, this isn’t going to happen in universities for governance reasons. Universities are scrutinised for their documented quality processes. This means that all the module and assessment paperwork has to be aligned. Was the assignment the same one as promised in the previous documentation? Was it assessed against the criteria provided in advance to students? Is there a paper trail showing internal and external moderation of assignments before the module was taught as well as after the assignments were submitted? (I’ve worked in a handful of universities and have been an external examiner at three others: my comments are general to the sector, not specific to any one institution.)

A well-meaning educator updating their assignments each time round would therefore be in breach of quality procedures unless they were willing to spend as long on admin paperwork as in teaching delivery. So in an Orwellian twist, an emphasis on quality results in its exact opposite. The system requires repetition rather than originality, which leads to the very problem this article has sought to address.

I confess this contradiction at the heart of academic life upsets me. More adaptable colleagues than me find it easier to square the quality-delivery circle. Rather in the style of a religious dissenter wanting to avoid the Inquisition, they profess their support for the true faith in public while going their own way in private. As long as the documentation is in order, no questions will be asked.

I started with this question: In a world of generative AI, who is the smartest person in the room?

For these reasons, I’m not willing to cast my vote for universities and lecturers – though I welcome the sensible approach proposed in the Russell Group policy paper (and applaud this piece by a smart learning technologist: ‘Yes, AI could profoundly disrupt education. But maybe that’s not a bad thing’). Nor am I willing to condemn students for using the tools available to them as long as they treat AI as an assistant rather than as an author.

But given the ability of generative AI to pick the low-hanging fruit (essays, say, or news releases), professionals and graduates are both being challenged to set their sights higher.

In place of providing simple answers, we need to ask smarter questions. The devil’s in the data.

Those are my thoughts, from the perspective of a lecturer. What do students think?

Jack Cameron-Dolan is an untypically tech-savvy undergraduate. He expresses frustration that generative AI might undermine his achievements.

‘As a student who has just gone through three years of essay writing, I think programs like ChatGPT and their ability to pump out thousands of essays in an instant does pose an interesting question towards our education system. Are essays/huge pieces of academic literature a valuable use of our time?

‘Why bother spending months hand crafting a dissertation when a non-sentient computer program can do it all in seconds?’

Prakriti Roy is a current Master’s student and our #CreatorAwards23 winner. She adds:

‘AI technologies like ChatGPT can be amazing tools if used efficiently. There are tools that can summarise your thoughts as you think aloud, some that can rewrite things for you, various presentation tools, and so on. I also do not believe that ChatGPT can write critical and analytical essays with original thought, but it is quite dynamic and is learning and improving every day, so I might be wrong about that!

‘That being said, relying entirely on AI to complete your assessments seems counterproductive to me. We go to university to learn skills that we can use in actual jobs, and we pay a lot for these degrees! The assessments are designed to test the skills learned on the courses. If students don’t actually do the assignments themselves, how would they test their skills? I wonder what impact it will have on their employability.’

Note on my use of *CheatGPT. I was at first pleased with this play on words, so I checked to see if my idea was original. It wasn’t – but in a way that tells the story even more powerfully. Cheatgpt.app is the shameless name for a commercial app billed as ‘your ChatGPT-4 study assistant with superpowers’. You’ll understand why I’m not linking to it. The cat has been let out of the bag, and educators are now playing catch-up.